
Scientist to Hollywood: Artificial Intelligence Doesn't Work the Way You Think It Does

As movie audiences anticipate the return of Arnold Schwarzenegger to his signature role as the original Terminator in November — yes, he WILL be back — scientist and rising star in the Artificial Intelligence world Matt Allen has a few thoughts for filmmakers about how AI is depicted in popular culture.

His main point: "You're getting it all wrong."

"Machine Learning is a popular buzzword, and a powerful tool," said Allen, who has co-authored two articles on AI for the American Chemical Society Nano and Nature Biomedical Engineering, including one on how AI can be used to help detect cancer early in patients who may not even be showing symptoms. "However, no matter how you slice it, machines do not learn. Machine Learning (ML) was named as such in the pursuit of machines that could learn like humans. The furthest we have come in that regard is an optimization procedure wherein at each step during the "training process" a "model" gets slightly better at whatever the task is. This procedure is definitively not "learning". The incremental improvements made by a model may seem intuitively similar to the incremental improvements that humans make when learning to do something new, but the similarities end there."

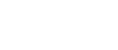

Allen added that, in his experience, pop culture representations of AI fall into one of two camps: either human-level AI is a robot that is indistinguishable from an actual human, or it is a scary killer death machine that is trying to destroy all humans for some reason.

"Whether or not either of those things could become a reality is not clear, but for the moment they are not," he said. "Real AI is the thing that makes algorithms seem limitless. It is the thing that enables computers to see through a camera, or for a robot to manipulate complex objects. Real AI is what enables algorithms to be made for things like identifying what items need restocking in stores, who is at the door, whether you should change lanes, or what a black and white image might look like in color. It is one key interface between the direct specificity that algorithms require, and the indirect abstractions which humans are constantly surrounded by. The modern applications for real AI are many, and all of them are very exciting, but none of them have to do with human-level intelligence."

Another misnomer, according to Allen, is the use of the term "Neural Network" to describe how a fictional android can achieve human-level intelligence and cognition.

"I often see the statement 'Neural Networks are modeled after the human brain' thrown around," he added. "This is not at all an accurate depiction of what a Neural Network really is. Using the term 'biologically inspired' is applicable, but only in an extremely loose sense. A Neural Network (NN) is a bunch of 'nodes' which are connected by 'weights'. The output of a node is nearly as simple as the weighted sum of its inputs. This seems roughly similar to neurons in the brain, both things could be considered nodes which have some number of incoming connections and produce some kind of output. However, the actual function of axons and neurons in the brain is extremely complex – far more so than just a weighted sum – and is not yet fully understood. Despite our incomplete understanding of the brain, it's clear to see that the functions of the brain are so vastly more sophisticated than the functions of any NN as to be incomparable."

Finally, he believes it is critical for people to understand that AI is not a completely independent process. It cannot exist, learn or improve without a human being guiding the experience.

"There is a huge amount of debate over how far away human-level AI could be," he said. "I hold the view that people are much more optimistic about it than they should be. There are no words that I could use which would accurately describe how unimaginably complicated human intelligence is. The recent advances in ML and AI in general are very exciting, and the world has changed because of them. However, human-level AI is not even remotely close to what we have right now. It may be attainable, but it is not yet within the scope of what ML can accomplish."

So don't worry. Skynet is not poised to take over, and the machines are nowhere near being able to do so on their own, unless, of course, there is a human behind the scenes pressing the buttons.